We’ve found that great customer service is a battle of inches. There’s no single magic switch you can flip to suddenly delight your customers to their heart’s content. We spend a lot of time thinking about, learning from and experimenting with this, always trying to improve.

Measuring our success (and failures)

Improving depends on data. If you aren’t measuring something systematically, it’s hard to compare yourself to others or to know whether you’re improving. Along with ongoing NPS (Net Promoter Score) surveys, we track customer satisfaction for every request in our Zendesk helpdesk, which adds up to over 2,000 new tickets every month.

Twenty-four hours after a ticket is solved, our helpdesk automatically emails the client who requested support. Here’s what it looks like:

Since introducing this system, we’ve taken every “Bad” satisfaction rating very seriously. We review each incident with the team, figure out what can be improved, and make sure it doesn’t happen again. One effective process we’ve found is the Five Whys analysis, which helps us get to the root cause of an issue. The problem on the surface can hide the real issue, so it’s always good discipline to drill down.

When we first started, we were averaging around 95% satisfaction (the percentage of Good versus Bad ratings over a rolling 60 days). After using and learning from this system for a while, our satisfaction score rose and, for the most part, stayed at 99%. Last March we were happy to announce our 99% score against an 85% global benchmark.
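To make the arithmetic concrete, here is a minimal Python sketch of a rolling satisfaction score. It assumes the score is the share of Good ratings among all Good and Bad ratings received in the 60 days ending on a given date, and that unrated tickets are left out. The `satisfaction_score` function and its input format are hypothetical illustrations, not Zendesk’s actual calculation.

```python
from datetime import date, timedelta


def satisfaction_score(ratings, as_of, window_days=60):
    """Hypothetical helper: percentage of Good ratings among Good + Bad
    ratings in the window_days ending on as_of.

    ratings: iterable of (rating_date, rating) pairs, where rating is
    "good" or "bad". Returns None if no ratings fall inside the window.
    """
    start = as_of - timedelta(days=window_days)
    good = bad = 0
    for rating_date, rating in ratings:
        # Count only ratings inside the rolling window (start, as_of]
        if start < rating_date <= as_of:
            if rating == "good":
                good += 1
            elif rating == "bad":
                bad += 1
    total = good + bad
    if total == 0:
        return None  # nothing rated in this window
    return 100.0 * good / total


if __name__ == "__main__":
    # 99 Good ratings and 1 Bad rating inside the window, plus one
    # older Bad rating that has already rolled out of the window.
    sample = [(date(2024, 3, 1), "good")] * 99
    sample.append((date(2024, 3, 2), "bad"))
    sample.append((date(2023, 12, 1), "bad"))
    print(satisfaction_score(sample, as_of=date(2024, 3, 31)))
```

In this model, a 99% score means that 99 of every 100 rated tickets in the window came back Good, and an old Bad rating stops counting once it falls outside the 60 days.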

We knew we could improve

We were consistently at 99%, but we knew we were far from perfect. We wanted to improve, but it’s hard to get much better than 99%, and most of our agents were already at 100%.

Nick and friends from Zendesk visited our offices when they were in town for a bootcamp, and we asked them whether they had any plans to change the question. What we wanted was a numeric rating scale, so the question wasn’t so black and white. We suspected that our clients often didn’t rate us Good or Bad because how they felt was somewhere in the middle.

Unfortunately, Zendesk has no plans to modify its rating system. Their main reason was that companies don’t need it: with a global benchmark of around 80%, there’s plenty of room to improve with the current method.

Change the question

We experimented with other survey tools like Formstack and SurveyMonkey, but kept coming back to Zendesk’s rating system because of how closely it integrates with the core ticketing platform. Ratings are connected to tickets and agents, which makes it easy to follow audit trails and track progress.

With all these factors in mind, we decided to change the way we asked our clients to rate our support. We changed two things:

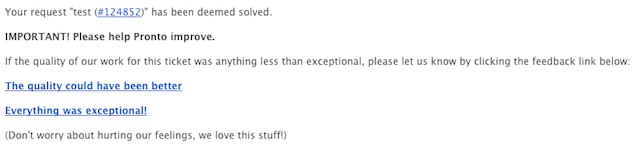

- When clients are asked: instead of an automated email 24 hours after a ticket is solved, the question now appears in the email that notifies the client that their request is solved. We want to know how our customers feel at the moment they’re reviewing work we’ve done or a question we’ve answered.

- The prompt text: “Bad” became “The quality could have been better” and “Good” became “Everything was exceptional!” Here’s how it looks in the email that gets sent out when a ticket is solved:

Our goal here was to get more “Bad” satisfaction feedback. Each time we get this feedback, it gives our team a chance to review and improve ourselves and our process. Back to the Five Whys!

The results

Since we made the change, our average rating has dropped to 97% as of this writing. It hasn’t gone as low as we would have liked, but the extra feedback has been great for our production teams as they work on our process, tools and communication.

One of the biggest changes we’ve made as a result of this feedback is the way we communicate internally. We’ve introduced a bunch of new macros that our teams use to spec out common types of requests, like a new page, contact form or blog post. These instruction templates help us make sure everything is accounted for and that work is double-checked.

Onward

This recent change has been valuable to us as we continue to measure ourselves and improve our service. We know we still have a long way to go. The team at Pronto has embraced the feedback from a Bad rating as an opportunity. People make mistakes and no one gets beaten up; it’s part of the Pronto culture to openly explore what happened and find solutions. We might discover that “I made a mistake” really means “I need more training”, and that’s something we can address.

If you’re a client, please don’t feel bad about clicking the “The quality could have been better” link when we solve a ticket. Your feedback is what pushes us to make things better for you. And as always, if you have any other ideas or suggestions, feel free to drop us a line and let us know how we’re doing. We’re always listening!