Every website on the internet wants to be more efficient at achieving its goals – most commonly converting visitors into leads or customers. For a long time, website owners had very little data to help them understand how visitors behaved on their site. Today, there is a huge amount of analytics data at your fingertips to help you make marketing decisions.

This data can help you understand how people arrive on your site, which pages they visit and how they convert into leads. But how do you know if your website could be more efficient at converting those leads?

A/B testing has long been touted as the ultimate way to improve the conversion rate of your website. You’ll often see case studies claiming huge conversion rate increases by changing one small thing on a landing page. There’s no doubt that A/B testing can have a positive impact on your site’s conversion performance, but in many cases, it’s not the holy grail people seem to think it is.

What is A/B testing?

A/B testing involves creating a landing page for a marketing campaign and then creating a near duplicate of that landing page with one small variation. For example, you might change the call to action or the color of a button.

Using tools like Unbounce or Optimizely, the original page and its variation are each shown to 50% of the visitors who arrive through the campaign, allowing you to compare how the two pages perform. Once you have enough data, you can determine which page is the winner and then create a new variation to test against it.
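If you’re curious how a 50/50 split can work under the hood, here is a minimal Python sketch. It is an illustration only, not how Unbounce or Optimizely actually do it: the `assign_variant` function, the experiment name and the visitor IDs are all hypothetical. Hashing the visitor ID means a returning visitor always sees the same version.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str = "landing-page-test") -> str:
    """Deterministically place a visitor in version A or B (roughly 50/50)."""
    # Hash the experiment name together with the visitor ID so that the same
    # visitor always lands in the same bucket for this experiment.
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # a number from 0 to 99
    return "A" if bucket < 50 else "B"


# Hypothetical visitor IDs, e.g. values stored in a cookie.
for visitor in ["visitor-1", "visitor-2", "visitor-3"]:
    print(visitor, "sees version", assign_variant(visitor))
```

Because the assignment depends only on the ID, you don’t need to store which version each visitor saw in order to show it to them again.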

Problem #1 – You need a lot of data

A big issue for websites that don’t receive a large amount of traffic is that you typically need a large sample size in order to be confident in the data collected. Statistical significance is a calculation used to determine how sure you can be that the result you’re seeing is real and not just a random fluke. A larger sample size (in this case, more visitors to the landing page and the variation being tested) means you can be more confident in your results.

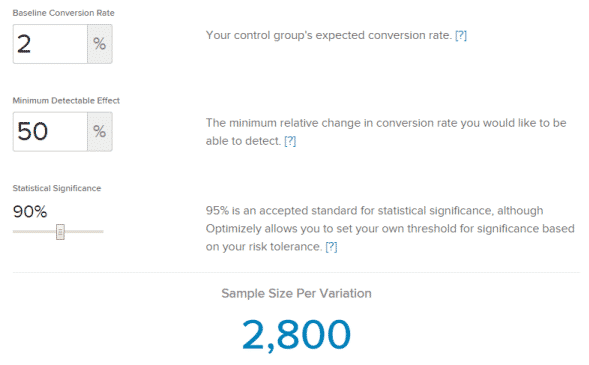

So what kind of numbers are we talking about? Well, let’s say your original landing page currently converts at a rate of 2%. If you wanted to detect a 50% increase in that conversion rate (from 2% to 3%), you would need 2,800 visits in order to be 90% confident in your results.

You can play around with more numbers in Optimizely’s Sample Size Calculator.
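For a rough sense of where figures like this come from, here is a Python sketch using the textbook normal-approximation formula for comparing two conversion rates. The function name, the one-sided test and the 80% power setting are assumptions that aren’t stated in this article, and Optimizely’s calculator uses its own method, so don’t expect the output to match the 2,800 figure exactly.

```python
import math
from statistics import NormalDist


def visits_per_variation(baseline, target, confidence=0.90, power=0.80):
    """Approximate visits needed per page to detect a lift from baseline to target.

    Assumptions (not from the article): one-sided test, 80% power,
    normal approximation to the binomial.
    """
    z_alpha = NormalDist().inv_cdf(confidence)  # how strict the test is
    z_beta = NormalDist().inv_cdf(power)        # chance of catching a real lift
    variance = baseline * (1 - baseline) + target * (1 - target)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (target - baseline) ** 2)


# 2% -> 3% is the example used above; 2% -> 2.5% is a smaller lift.
print(visits_per_variation(0.02, 0.03))
print(visits_per_variation(0.02, 0.025))
```

The thing to notice is the shape rather than the exact numbers: the smaller the improvement you’re trying to detect, the faster the required traffic grows.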

For many small businesses, 2,800 visits means multiple years’ worth of traffic to a landing page. It takes a lot of traffic before you can trust your results.

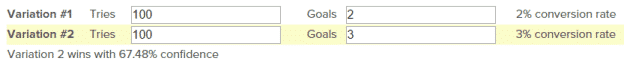

Let’s look at this with traffic numbers that are more reasonable for a small business. If the A and B versions of your landing page each receive 100 visits, and Version A receives 2 conversions while Version B receives 3 conversions, you can only be 67% sure that Version B is the better-performing page.

That’s not a whole lot better than a coin toss. And considering that statisticians typically aim for 90-95% statistical significance, you don’t have enough data here to make a well-informed decision about your landing page.
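To see where a number like 67% comes from, here is a rough Python sketch of a one-sided two-proportion z-test, one common way to estimate this kind of confidence. The function name is made up for illustration, and with so few conversions the normal approximation it relies on is only a ballpark.

```python
from math import sqrt
from statistics import NormalDist


def confidence_b_beats_a(visits_a, conversions_a, visits_b, conversions_b):
    """Estimate how confident you can be that Version B converts better than A."""
    rate_a = conversions_a / visits_a
    rate_b = conversions_b / visits_b
    # Pool both pages to estimate how much the rates vary by chance alone.
    pooled = (conversions_a + conversions_b) / (visits_a + visits_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / visits_a + 1 / visits_b))
    z_score = (rate_b - rate_a) / std_error
    # One-sided: the probability mass below the observed z-score.
    return NormalDist().cdf(z_score)


confidence = confidence_b_beats_a(100, 2, 100, 3)
print(f"{confidence:.0%}")
```

With 100 visits per page and 2 versus 3 conversions, this prints 67%, well short of the 90-95% range.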

Problem #2 – Aiming for the local maximum

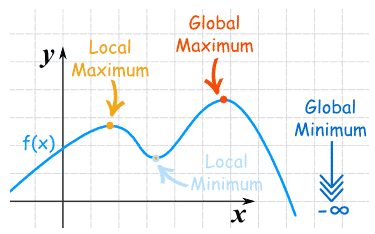

In mathematical functions, the global maximum is the highest value a function reaches across its entire range. The local maximum is the highest value it reaches within a limited range.

In terms of A/B testing, the global maximum would be the best possible landing page, while the local maximum is a better version of your existing landing page. When you start A/B testing small variations like headlines, calls to action or button colors, you head down a path towards the local maximum for your landing page, but you might be missing out on an even better page that’s very different from your existing one.

It’s like walking down a street looking for a place to eat. You might end up at the best restaurant on that street, but you probably won’t eat at the best restaurant in town.
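The same idea can be shown with a toy Python example. The `scores` list is entirely made up: imagine each position is a possible page design and each value is its conversion rate in percent. Taking small steps towards whichever neighbor looks better, much like testing one small variation at a time, stops at the first peak it finds.

```python
def hill_climb(scores, start):
    """Keep stepping to the better neighbor until no neighbor is an improvement."""
    position = start
    while True:
        neighbors = [i for i in (position - 1, position + 1) if 0 <= i < len(scores)]
        best = max(neighbors, key=lambda i: scores[i])
        if scores[best] <= scores[position]:
            return position  # no neighbor is better: we are on a peak
        position = best


# Hypothetical conversion rates (%) for a row of page designs.
scores = [1.0, 2.0, 2.6, 2.2, 1.5, 3.0, 4.5, 6.0, 4.0]

local_peak = hill_climb(scores, start=1)
global_peak = max(range(len(scores)), key=lambda i: scores[i])

print("Small steps end at design", local_peak, "with", scores[local_peak], "%")
print("The best design is", global_peak, "with", scores[global_peak], "%")
```

Starting from design 1, the small steps settle on design 2, because every neighboring design looks worse, while the much stronger design 7 sits on the other side of a dip.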

So what should I do?

If you’re running a campaign that receives limited traffic, rather than spending your time A/B testing, put that effort into building the best landing page you can. Spend time thinking about the pain point you’re trying to address and how you can use that to capture your audience’s attention. Write a strong call to action that will drive your visitors to convert.

Then, let that page run with the campaign for a couple of months. If after that time you feel that the page is underperforming, start from scratch and build an entirely new landing page that is completely different from the original. This will give you a better chance of finding the global maximum than A/B testing, which might take months or years to collect enough data.

Keep in mind that the landing page won’t always be the reason your campaign is underperforming. Before creating a new landing page, take the time to analyze other aspects of the campaign. Do your ads need to be rewritten? Does your email need to be redesigned? There might be a simpler solution you can find before building a whole new page.

It’s OK to A/B test

There are still cases when A/B testing is the right thing to do. For example, if you’re running an ad campaign that’s receiving thousands of impressions and dozens of clicks each month, you have enough data to run tests on a regular basis.

Just make sure you know what goal you’re trying to optimize for and how much data you’ll need in order to declare a winner. And to avoid getting stuck at the local maximum, throw a radically different variation into the test just to try something new.